Light Field Powered: First Smartphones with Holographic Displays Could Arrive within Two Years

Just a few years ago, mobile displays took a leap forward with increased pixel densities that ensure crisp images on relatively small screens. Today, most smartphones feature displays with up to 538 pixels per inch (ppi) – a density much higher than what the human eye can resolve. So what’s the next display innovation we can look forward to?

In her recent article on IEEE Spectrum, Sarah Lewin introduced two companies that are working on making what she calls “holographic” light field displays (i.e. glasses-free 3D displays) a reality.

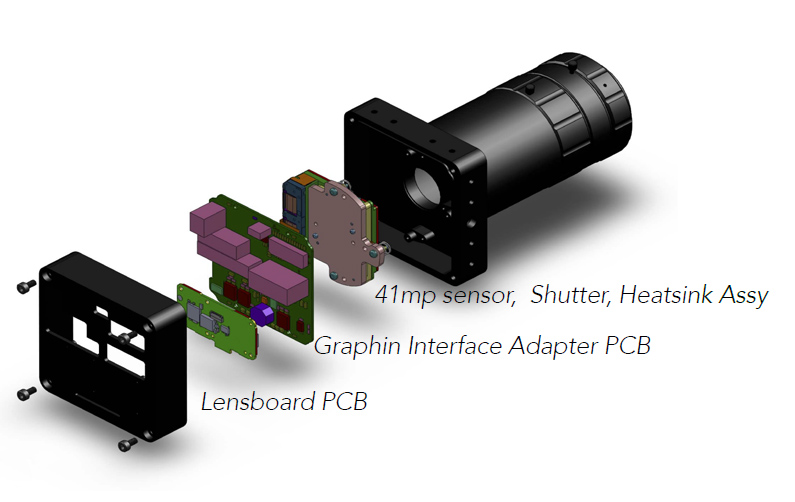

Ostendo Technologies recently presented the results of nine years’ work at the Display Week conference: a 4×2 array of Quantum Photonic Imager chips (each consisting of LEDs, image processors and embedded rendering software) plus a microlens array forms a 1-megapixel (1024×768 px, XGA resolution) prototype display. Unlike conventional displays, it does not send light in every direction but emits it in very narrow, collimated beams. This enables the prototype to emit different images in different directions, producing about 2,500 different perspective views, so the image and motion displayed appear consistent regardless of the viewer’s position.

To cope with the computational load involved, each pixel (at a stunning 5-10 µm pixel pitch, or up to 5000 ppi) actually has its own dedicated image processor. According to NDTV, Ostendo is planning to produce these 3D chips in the second half of 2015.
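To see where the "up to 5000 ppi" figure comes from, the pixel pitch can be converted into pixels per inch. The short sketch below does only this unit conversion; the function name `ppi_from_pitch_um` is ours for illustration and is not taken from Ostendo's materials.

```python
# Convert a pixel pitch in micrometers to pixels per inch (ppi).
# One inch is exactly 25,400 micrometers.
MICROMETERS_PER_INCH = 25_400.0


def ppi_from_pitch_um(pitch_um: float) -> float:
    """Return the pixel density for a given center-to-center pixel pitch."""
    return MICROMETERS_PER_INCH / pitch_um


if __name__ == "__main__":
    # The two ends of the 5-10 µm range quoted in the article.
    for pitch in (5.0, 10.0):
        print(f"{pitch:g} µm pitch is about {ppi_from_pitch_um(pitch):.0f} ppi")
```

A 5 µm pitch works out to roughly 5,080 ppi and a 10 µm pitch to 2,540 ppi, so the quoted 5000 ppi corresponds to the finest pitch in the range.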

Each of the 1 million pixels on Ostendo’s little chip consists of a layer each of red, green, and blue micro-LEDs (or lasers, in some iterations) sitting on top of its own small silicon image processor. The pixels are between 5 and 10 micrometers on a side. By modulating the power to the individual layers, each pixel can send out any color of light in a thin, focused beam. Multiple vertical waveguides carry the light out from the layers and modulate its direction—although company representatives won’t specify exactly how—and an array of microlenses focus and direct the beam further. Having an image processor under each pixel saves power and lightens the overall computational load, which is considerable for complex images because they must be simultaneously rendered for viewing from thousands of different perspectives.
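To get a feeling for why the rendering load is "considerable", here is a rough back-of-the-envelope sketch. It assumes, purely for illustration, that each of the roughly 2,500 views would be rendered at the full XGA resolution quoted above. The article does not say how Ostendo actually divides pixels between spatial and angular resolution, so treat the result as an illustrative upper bound rather than a specification. The function name is hypothetical.

```python
# Rough estimate of how many image samples a multi-view display has to
# produce per frame. ASSUMPTION: every view is rendered at full resolution.
def samples_per_frame(views: int, width: int, height: int) -> int:
    """Number of (view, pixel) samples in a single frame."""
    return views * width * height


if __name__ == "__main__":
    VIEWS = 2_500               # "about 2,500" perspective views (from the article)
    WIDTH, HEIGHT = 1024, 768   # XGA resolution (from the article)

    per_view = WIDTH * HEIGHT
    total = samples_per_frame(VIEWS, WIDTH, HEIGHT)
    print(f"pixels per view:   {per_view:,}")
    print(f"samples per frame: {total:,}")
```

Under that assumption a single frame would contain nearly two billion samples, which helps explain why spreading the work across per-pixel processors is attractive.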

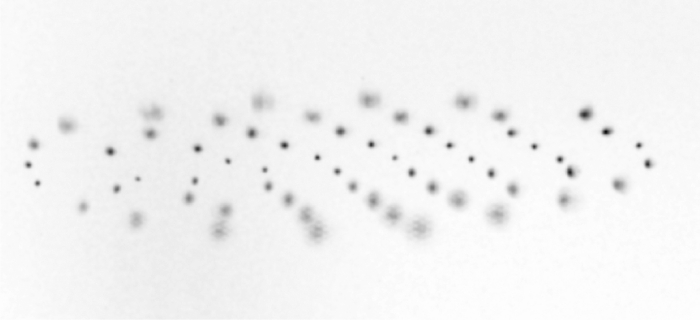

The second company, Leia, is actually a spin-off from the HP Labs research project that we reported on last year. Their technology uses standard LEDs and nanostructures called “diffraction gratings” to emit light in up to 64 different directions, creating 64 perspective views.

That is significantly fewer than Ostendo’s prototype offers, but the technology is much easier to integrate into current displays and can be scaled up. Leia, too, plans to have a first commercial product out in 2015.

The HP team started with a standard LED backlight, in which light from LEDs arrayed along the side of a device are delivered through light guides to a plane behind a liquid crystal display. On top of the light guide, the team added a grid of diffraction gratings, each with one of 192 different combinations of pitches and orientations, to act as individual pixels. A portion of the light traveling through the waveguide exits through each of the gratings and is sent in a slightly different direction. By modulating the light going to each grating, the researchers produced a series of images each angled to produce a 3-D picture from a different point of view.
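How can a grating's pitch steer light? A minimal sketch, using the generic first-order grating-coupler relation sin(θ) = n_eff − λ/Λ, where λ is the wavelength, Λ the grating pitch, and n_eff the effective refractive index of the light guide. This is textbook physics, not HP's or Leia's published design: the value n_eff = 1.5, the three wavelengths, and the function name are all assumptions chosen for illustration.

```python
import math

# ASSUMED values for illustration only (not from HP or Leia).
N_EFF = 1.5  # effective index of the guided light
WAVELENGTHS_NM = {"red": 630.0, "green": 530.0, "blue": 450.0}


def grating_pitch_nm(wavelength_nm: float, exit_angle_deg: float,
                     n_eff: float = N_EFF) -> float:
    """Pitch that sends first-order diffracted light out at exit_angle_deg.

    Rearranges sin(theta) = n_eff - wavelength / pitch for the pitch.
    The angle is measured in air, relative to the display normal.
    """
    return wavelength_nm / (n_eff - math.sin(math.radians(exit_angle_deg)))


if __name__ == "__main__":
    for angle in (-20.0, 0.0, 20.0):
        pitches = ", ".join(
            f"{color}: {grating_pitch_nm(wl, angle):.0f} nm"
            for color, wl in WAVELENGTHS_NM.items()
        )
        print(f"exit angle {angle:+.0f} deg -> {pitches}")
```

Under these assumptions, each color needs its own pitch to leave the display in the same direction. That may be one reason the number of grating designs (192) is larger than the number of views (64), though the source does not say so.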

https://www.youtube.com/watch?v=4qko-AlbEoU

Both light field display technologies could make it into a mobile phone within two or three years, but a lot of work remains before they can match the standards set by current 2D displays.

“Everybody wants to put 3-D on a smartphone,” says David Fattal, founder of Leia, a light-field display start-up. “And customers aren’t going to want to compromise between a holographic 3-D phone that has mediocre 2-D performance and a normal phone. They’re going to want the best of the best.”

company is a bunch of scam artists. I’ve met and spoken with their CTO and CEO. Neither has any expertise in the area, and both seem a little naively overconfident. They didn’t really speak about anything other than some canned answers about the performance, which were so misleading I thought they had to know they were lying. Plus, they’ve only had one color forever, and their tri-color display… sure, if they could make them at high yield, you’d think they’d have shown off a bunch of them, no? Has anyone actually seen this holographic projector, or have they played stupid and let the confusion ensue between their projector and light field display, neither of which are holograms? Materially misleading. I would be pissed and throwing out the execs/mgmt if I were an investor in that company.