How the Lytro Camera Sees the World: Raw Data of a LightField Sensor

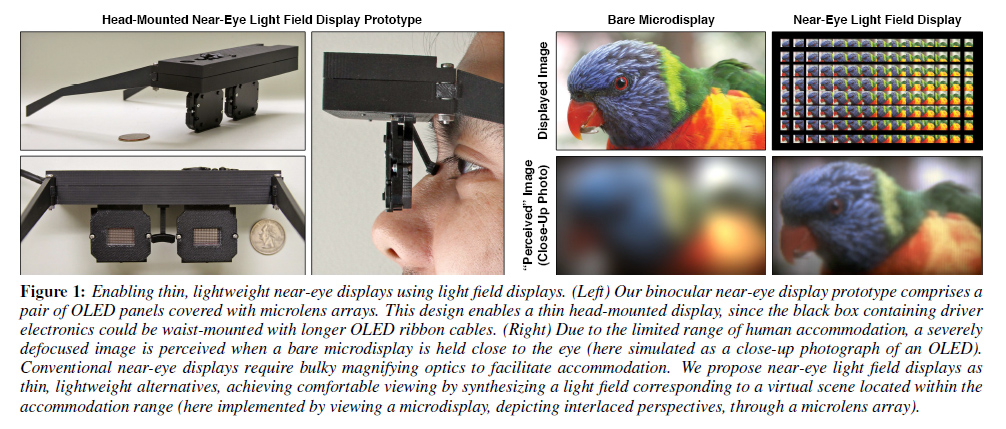

Lytro’s LightField Sensor consists of an ordinary CMOS imaging sensor with a so-called microlens array mounted on top of it. This combination makes it possible to record not only a flat representation of a scene but also, with the help of complex algorithms, the direction of individual light rays.

But what does that really mean, and what exactly does the sensor see?

The camera’s RAW data can be extracted using lfpsplitter (though the tool has not yet been updated to work with the newest version of .lfp files). Since the raw image contains unprocessed 12-bit sensor data, as opposed to the demosaiced 8-bit data normally found in processed JPGs, it cannot be displayed in colour as-is.
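For readers who want to try the first step themselves, here is a minimal Python sketch of turning packed 12-bit sensor values into an 8-bit grayscale image. It assumes the common big-endian packing in which every three bytes hold two 12-bit pixels; check that against what lfpsplitter actually produces before relying on it. It writes a binary PGM instead of a TIFF so that it needs only the standard library. `unpack_12bit`, `to_8bit` and `write_pgm` are hypothetical helpers, and the image width and height have to come from the file's metadata.

```python
def unpack_12bit(data):
    """Unpack 12-bit samples stored two per three bytes (assumed big-endian layout)."""
    if len(data) % 3:
        raise ValueError("packed 12-bit data must be a multiple of 3 bytes long")
    pixels = []
    for i in range(0, len(data), 3):
        b0, b1, b2 = data[i], data[i + 1], data[i + 2]
        # First pixel: all 8 bits of b0, then the high nibble of b1.
        pixels.append((b0 << 4) | (b1 >> 4))
        # Second pixel: the low nibble of b1, then all 8 bits of b2.
        pixels.append(((b1 & 0x0F) << 8) | b2)
    return pixels


def to_8bit(pixels):
    """Map 0..4095 to 0..255 by dropping the 4 least significant bits."""
    return bytes(p >> 4 for p in pixels)


def write_pgm(path, width, height, gray8):
    """Write an 8-bit binary PGM (P5) file; a TIFF writer would need a third-party library."""
    if len(gray8) != width * height:
        raise ValueError("pixel count does not match width * height")
    with open(path, "wb") as f:
        f.write(f"P5 {width} {height} 255\n".encode("ascii"))
        f.write(gray8)
```

A typical call would be `write_pgm("sensor.pgm", width, height, to_8bit(unpack_12bit(raw_bytes)))`, where `raw_bytes`, `width` and `height` stand in for the extracted data and its dimensions. No black-level correction or demosaicing is done, so the sensor's colour filter pattern shows up as a fine texture in the grayscale result.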

Flickr user Corby Ziesman took the time to extract the RAW sensor data and convert it to a grayscale TIFF file. He then made a focus stack of the JPG layers from the processed -stk.lfp file and superimposed it onto the TIFF file, revealing what the image sensor actually records: one large picture made up of about 100,000 sub-images that differ only in minute details.

> Here I extracted the JPEGs from the LFP file and focus stacked the images, then I extracted the sensor RAW data from the other LFP file and converted it to a TIFF and overlaid the color from the focus stacked image onto the grayscale sensor RAW image. This way you can see how the Lytro actually views the scene with the micro lens array over the sensor. You can even see individual hot pixels.
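Corby's two steps, focus stacking the JPG layers and overlaying their colour on the grayscale sensor image, can be sketched in plain Python. This is not his actual workflow (he most likely used an image editor); it is an illustration under stated assumptions: all images have already been resized to the raw image's dimensions, sharpness is measured with a simple absolute Laplacian, and the overlay keeps the raw image's brightness while borrowing the stacked image's colour, roughly like a "color" blend mode. All function names are hypothetical.

```python
def luminance(rgb):
    """Rec. 601 luma of an (r, g, b) tuple."""
    r, g, b = rgb
    return 0.299 * r + 0.587 * g + 0.114 * b


def sharpness(lum, y, x):
    """Absolute 4-neighbour Laplacian; neighbours are clamped at the image border."""
    h, w = len(lum), len(lum[0])
    up = lum[max(y - 1, 0)][x]
    down = lum[min(y + 1, h - 1)][x]
    left = lum[y][max(x - 1, 0)]
    right = lum[y][min(x + 1, w - 1)]
    return abs(up + down + left + right - 4 * lum[y][x])


def focus_stack(layers):
    """layers: same-size images (lists of rows of (r, g, b)); keep the sharpest layer per pixel."""
    lums = [[[luminance(p) for p in row] for row in img] for img in layers]
    h, w = len(layers[0]), len(layers[0][0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            best = max(range(len(layers)), key=lambda k: sharpness(lums[k], y, x))
            row.append(layers[best][y][x])
        out.append(row)
    return out


def overlay_colour(gray, colour):
    """gray: rows of 0..255 sensor values; colour: rows of (r, g, b) from the stack."""
    out = []
    for grow, crow in zip(gray, colour):
        row = []
        for g, rgb in zip(grow, crow):
            lum = luminance(rgb)
            if lum == 0:
                # Black carries no hue, so keep the raw brightness as neutral grey.
                row.append((g, g, g))
                continue
            scale = g / lum  # rescale the colour so its brightness matches the raw pixel
            row.append(tuple(min(255, round(c * scale)) for c in rgb))
        out.append(row)
    return out
```

Picking a layer per pixel like this is noisy on real photos; practical focus stackers usually smooth the sharpness map first, but the per-pixel version keeps the idea easy to follow.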

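It is this picture that allows sophisticated algorithms to find and match individual light rays, trace them back to their source, and create a three-dimensional representation of the scene. Each microlens records where light hit the sensor, while the position of a pixel underneath that microlens tells from which part of the main lens, and therefore from which direction, the light arrived. Following the light rays back to their original source also lets us virtually shift the focal plane of the camera (software refocus).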
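Software refocus is often illustrated in the light-field literature with the "shift-and-add" method: collect the pixel at the same position under every microlens into one sub-aperture image, shift each of these images in proportion to its offset from the centre of the aperture, and average them. The sketch below is not Lytro's algorithm, and it rests on heavy simplifying assumptions: a single-channel raw image, microlenses on a square, axis-aligned grid with exactly `n` × `n` pixels each (the real array is hexagonal, slightly rotated and needs calibration), and shifts rounded to whole pixels. `subaperture_images` and `refocus` are hypothetical helpers.

```python
def subaperture_images(raw, n):
    """Split a raw image into n x n sub-aperture images, indexed views[v][u][y][x].

    Assumes each n x n tile of `raw` sits under exactly one microlens.
    """
    if len(raw) % n or len(raw[0]) % n:
        raise ValueError("raw dimensions must be multiples of n")
    h, w = len(raw) // n, len(raw[0]) // n
    # Pixel (v, u) under microlens (y, x) lands in view (v, u) at position (y, x).
    return [[[[raw[y * n + v][x * n + u] for x in range(w)] for y in range(h)]
             for u in range(n)] for v in range(n)]


def refocus(views, shift):
    """Shift-and-add refocus: `shift` is the pixel offset per unit of aperture position."""
    n = len(views)
    h, w = len(views[0][0]), len(views[0][0][0])
    c = (n - 1) / 2  # centre of the aperture in view coordinates
    out = [[0.0] * w for _ in range(h)]
    for v in range(n):
        for u in range(n):
            dy = round(shift * (v - c))
            dx = round(shift * (u - c))
            img = views[v][u]
            for y in range(h):
                sy = min(max(y + dy, 0), h - 1)  # clamp samples at the border
                for x in range(w):
                    sx = min(max(x + dx, 0), w - 1)
                    out[y][x] += img[sy][sx]
    count = n * n
    return [[val / count for val in row] for row in out]
```

Choosing a different `shift` brings a different depth plane into focus; the sign convention here is arbitrary. Regions whose views were not aligned by the chosen shift get averaged across several positions, which is what produces the out-of-focus blur.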

https://pictures.lytro.com/corbyz/pictures/52572

See the full version on Flickr: Actual Lytro micro lens array

Since there is no such thing as a “light ray” except in poetry, I still believe the whole thing is a scam. So it takes multiple pictures at varying focal lengths and lets you select which one you want later. Big deal. I’d like someone to run two tests: (1) take a photo through a window screen, and (2) take a picture of a tape measure stretched straight out from the camera. See what happens. Can you focus on either the screen or the outside through the screen? Can you really focus on any point along the tape measure?

Mike, I don’t have a window-screen picture at hand, but here’s one of a tape measure:

The software obviously applies a fair bit of compression (otherwise every file would be about 20 MB and take minutes to load before it could be clicked), but we’ve seen demos of seamless refocusing throughout entire pictures.