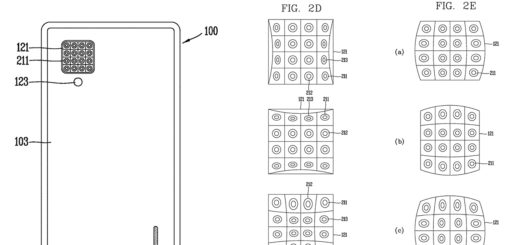

K|Lens presents first sample photos

After introducing its prototype light field lens last year, German startup K|Lens has now released the first sample photos recorded with it. The company showed off a sample image of spring flowers in...
