Lens Blur: Google Camera App for Android gets Refocus and Adjustable Depth of Field

Light field technology is gaining momentum in the mainstream, but we have yet to see the first smartphone featuring a Pelican Array Camera, Tesseract/Focii module, or something similar.

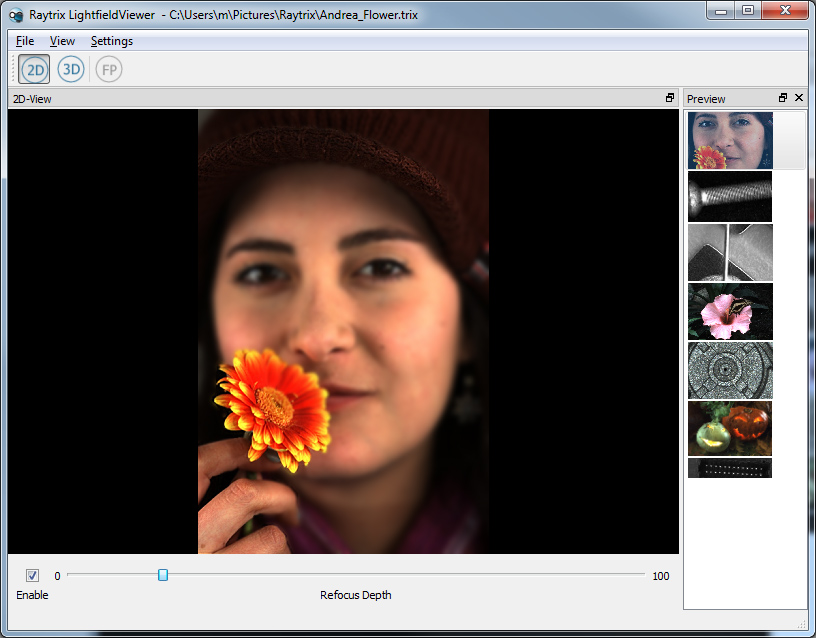

Meanwhile, more and more developers are combining advanced software with traditional camera modules to recreate one of the most popular features of light field cameras: software refocus.

The latest addition to the growing list of companies and developers recreating the refocus effect on mobile devices is none other than the mother of Android: Google. With “Lens Blur”, the company has built its own version of software refocus into its all-new Google Camera app (free).

Lens Blur requires users to take a picture and do an “upward sweep” while keeping the subject centered. This allows the app to estimate depth in a picture (i.e. elements that change less during the sweep are farther away), which in turn enables us to perform software refocus (tap to focus), and even adjust the depth of field using a slider (i.e. virtual aperture).
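To make the idea concrete, here is a minimal Python sketch of the two steps described above: turning apparent movement during the sweep into a relative depth, and turning depth plus a virtual aperture into a blur amount. This is not Google's implementation. The function names, the per-element pixel shifts, and the simple "blur grows with distance from the focal plane in inverse depth" rule are all assumptions chosen for illustration.

```python
def relative_depth(shift_px, eps=1e-6):
    """Relative depth from the apparent shift (parallax) seen during the sweep.

    Assumption: depth is inversely proportional to the shift, so elements
    that move less between frames are treated as farther away.
    """
    return 1.0 / max(abs(shift_px), eps)


def blur_radius(depth, focus_depth, aperture):
    """Blur amount for one element, given the chosen focal plane.

    Assumption: blur scales with the virtual aperture and with the
    difference in inverse depth between the element and the focal plane.
    """
    return aperture * abs(1.0 / depth - 1.0 / focus_depth)


# Hypothetical shifts (in pixels) measured for three scene elements.
shifts = {"subject": 20.0, "tree": 5.0, "mountain": 1.0}
depths = {name: relative_depth(s) for name, s in shifts.items()}

# "Tap to focus" on the subject, then move the aperture slider.
focus = depths["subject"]
for aperture in (0.0, 0.1, 0.5):
    radii = {name: blur_radius(d, focus, aperture) for name, d in depths.items()}
    print(aperture, radii)
```

In this toy model the element chosen as the focal plane always stays sharp, an aperture of zero leaves the whole picture sharp, and widening the aperture blurs elements more the farther they are from the focal plane.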

However, in contrast to other solutions, interactive Lens Blur images are only available on the device, and exports/uploads are flat images. Update: Reader Tobias Jakobs tells us that depth data is actually stored in the picture’s EXIF data, and can be extracted/used by other software.

https://www.youtube.com/watch?v=4RJf9V85hHc

Popular features of Android’s stock camera app, such as Photosphere, were previously (mostly) limited to Nexus and Google Play devices, since manufacturers often replaced the app with their own software. By releasing Google Camera as a standalone app on the Play Store, the company now enables all users running Android 4.4 KitKat or later to experiment with Lens Blur as well as Photosphere.

If you’re looking for some more information, Google explains how it works, and Android Police gives us a detailed hands-on report.

“However, in contrast to other solutions, interactive Lens Blur images are only available on the device, and exports/uploads are flat images.”

That is not right. The depth map is inside the EXIF data and you can extract it e.g. with exiftool. Here is my first experiment:

http://dablogter.blogspot.de/2014/04/googles-new-camera-app.html

Thanks for the clarification – we’ll update the article! Please keep us posted about your experiments! :)