Disney Research: A More Efficient Method for Reconstruction of Gigaray Light Fields

Today’s light field processing algorithms have mostly been tailored to relatively low image resolutions in the range of a few megapixels. As a result, even as sensor resolutions increase, light field technology will remain effectively limited in resolution. Analyzing light fields at high spatio-angular resolution, so-called “gigaray light fields”, remains a technological challenge because of the sheer computing power it requires.

Researchers at Disney Research in Zürich, Switzerland, have come up with a new, faster way of processing such light fields. Their secret: ignore some of today’s established practices in image-based reconstruction, and try something different.

Working with 100 individual standard DSLR pictures (21 megapixels each), Changil Kim and colleagues have devised a method that can deal with gigaray light fields on a standard GPU (graphics processing unit). Their algorithm is designed to selectively estimate depth for individual pixels rather than patches, and it first concentrates on object boundaries. Smooth reconstruction of interior regions is performed as a second step.
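To put the term “gigaray” into numbers, here is a small back-of-the-envelope sketch. The image count and resolution come from the paragraph above; the helper name `ray_count` and the storage figure of 3 bytes per ray (8-bit RGB) are assumptions made for illustration only, not values from the paper.

```python
def ray_count(num_views, megapixels_per_view):
    """Total number of light rays: one ray per pixel per view."""
    return num_views * megapixels_per_view * 1_000_000


# Figures from the article: 100 DSLR pictures at 21 megapixels each.
rays = ray_count(100, 21)
gigarays = rays / 1e9

# Assumption: each ray is stored as uncompressed 8-bit RGB (3 bytes).
raw_gigabytes = rays * 3 / 1e9

print(f"{gigarays:.1f} gigarays, about {raw_gigabytes:.1f} GB of raw color data")
```

Under that storage assumption, the raw input alone comes to several gigabytes, which helps explain why memory-efficient processing is a concern on a single GPU.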

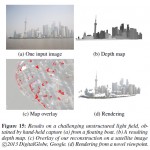

The algorithm is shown to work with both linear light fields (captured using a DSLR and camera dolly) and sets of images that were taken with a hand-held camera.

3D reconstruction is much faster than with current methods, and the results stand out for their high level of detail and very precise silhouettes.

Why is Disney interested in light field technology? High-resolution 3D computer models of complex, real-life scenes have become one of the most important aspects of today’s movie production, and according to co-author Alexander Sorkine-Hornung, 3D modeling is only the beginning:

Three-dimensional models have become increasingly important for digitizing, visualizing and archiving the real world. In movie production, for instance, creating accurate 3D models of movie sets is often necessary for post-production tasks such as integrating real-world imagery with computer-generated effects.

“Our method could be used for applications such as automatic image segmentation, which would simplify background removal in detailed scenes. It also would be useful for image-based rendering, in which new 2D images are created by combining real images.”

Publication abstract:

This paper describes a method for scene reconstruction of complex, detailed environments from 3D light fields. Densely sampled light fields in the order of 10^9 light rays allow us to capture the real world in unparalleled detail, but efficiently processing this amount of data to generate an equally detailed reconstruction represents a significant challenge to existing algorithms.

We propose an algorithm that leverages coherence in massive light fields by breaking with a number of established practices in image-based reconstruction. Our algorithm first computes reliable depth estimates specifically around object boundaries instead of interior regions, by operating on individual light rays instead of image patches. More homogeneous interior regions are then processed in a fine-to-coarse procedure rather than the standard coarse-to-fine approaches. At no point in our method is any form of global optimization performed. This allows our algorithm to retain precise object contours while still ensuring smooth reconstructions in less detailed areas. While the core reconstruction method handles general unstructured input, we also introduce a sparse representation and a propagation scheme for reliable depth estimates which make our algorithm particularly effective for 3D input, enabling fast and memory efficient processing of “Gigaray light fields” on a standard GPU.

We show dense 3D reconstructions of highly detailed scenes, enabling applications such as automatic segmentation and image-based rendering, and provide an extensive evaluation and comparison to existing image-based reconstruction techniques.
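The idea of estimating depth per light ray near object boundaries, as described in the abstract above, can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the function name `estimate_edge_disparities`, the gradient-based edge test, and the use of variance across views as the consistency score are simplifying assumptions. It works on a single epipolar-plane image (one scanline stacked over all views of a linear light field) and leaves out the fine-to-coarse filling of interior regions, the sparse representation, and the propagation scheme.

```python
import numpy as np


def estimate_edge_disparities(epi, s_ref, candidates, edge_threshold):
    """Per-pixel disparity for the reference row of an epipolar-plane image.

    epi:        2D array [view, pixel], one scanline seen from each view
    s_ref:      index of the reference view
    candidates: disparities to test, in pixels per view step
    Returns (disparity, mask); disparity is NaN where no estimate was made.
    """
    n_views, width = epi.shape
    u = np.arange(width, dtype=float)

    # Edge confidence: horizontal gradient magnitude in the reference view.
    # Only pixels near intensity edges get a depth estimate in this pass.
    mask = np.abs(np.gradient(epi[s_ref])) > edge_threshold

    best_cost = np.full(width, np.inf)
    disparity = np.full(width, np.nan)
    for d in candidates:
        # A point seen at u in the reference view appears at
        # u + (s - s_ref) * d in view s; gather that ray from every view.
        samples = np.stack([
            np.interp(u + (s - s_ref) * d, u, epi[s]) for s in range(n_views)
        ])
        # Assumed score: variance across views (low = consistent radiance).
        cost = samples.var(axis=0)
        better = mask & (cost < best_cost)
        best_cost[better] = cost[better]
        disparity[better] = d
    return disparity, mask


# Synthetic check: a step edge at pixel 32 that moves 1 pixel per view.
n_views, width, s_ref, true_d = 9, 64, 4, 1.0
u = np.arange(width)
epi = np.stack([
    (u - (s - s_ref) * true_d >= 32).astype(float) for s in range(n_views)
])
disparity, mask = estimate_edge_disparities(
    epi, s_ref, candidates=[0.0, 0.5, 1.0, 1.5, 2.0], edge_threshold=0.1
)
```

On this synthetic input only the two pixels bordering the edge are selected, and both recover the true disparity of 1 pixel per view. All other pixels stay unassigned, which is where a separate interior-filling step would take over.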

More information: Kim C, Zimmer H, Pritch Y, Sorkine-Hornung A, Gross M (2013) Scene Reconstruction from High Spatio-Angular Resolution Light Fields. ACM Transactions on Graphics 32(4) (Proceedings of SIGGRAPH 2013)
